Page Summary

- “Creative prompting”, where a user provides detailed input for an AI-generated image, resembles commissioning an artwork. The biggest difference is that it erases the person who bears primary responsibility for the art: the artist.

- AI chatbots similarly endanger relationships. Just as they might replace an artist, they can also replace friends, romantic partners, and deceased loved ones.

- Chatbots exploit emotional voids, isolating the user from real people. This can be especially dangerous for children and other vulnerable groups.

- There are billions of dollars behind this effort, but you don’t have to accept it.

- You can strengthen your in-person relationships with family, friends, coworkers, even neighbors. We don’t need AI, we need people.

How do AI chatbots impact creativity and what does that mean for relationships?

They tend to simulate what only a person can do, just as they could simulate relationships with loved ones.

Ok, but what does that actually mean?


What about “creative prompting”?

Some advocates make the case that prompting a Large Language Model (LLM) for something like image generation still involves a lot of creative input from the user’s side. The user might dictate a refined style or composition that gives the resulting image a distinctive shape.

There is already a version of this in the world of actual art. Some works of art are commissioned by a patron who provides input. Take, for example, Michelangelo’s famous Pietà sculpture.

Who is most responsible for this work of art? If you asked around, would anyone mention Cardinal Jean Villier de la Grolaie? Or would they credit Michelangelo instead? Cardinal Villier commissioned the piece, and maybe he even provided some input to Michelangelo. Why is poor Cardinal Villier almost forgotten by history in comparison with the artist?

It’s ok to acknowledge the input of someone who commissions a work of art. But who gets the majority of the praise or blame? The commissioner might provide more or less input, but isn’t it fair to say that the artist is the one chiefly responsible? This would hold true even in cases where someone steals credit for another’s work. Let’s say Michelangelo had actually stolen the work of an unknown artist. Even then, there is a person out there who is responsible for the work and is a victim of theft.

Now let’s go back to the AI version of this. The user is equivalent to Cardinal Villier, the one who commissions the work, but who is equivalent to Michelangelo? Who is the artist receiving the commission? Who orchestrates the components of the image into a coherent whole? Who makes all the moment-to-moment decisions while sculpting marble, sketching with pencil, painting with watercolors, or arranging digital pixels to bring a vision to life? Is it possible to credit the thousands or millions of artists whose works were fed into the AI’s training data without their permission? Even if it were possible, would they actually be compensated?

Content generated or manipulated by AI are to be clearly marked and distinguished from content created by humans. The authorship and sovereign ownership of the work of journalists and other content creators must be protected.

Pope Leo XIV, “Preserving Human Voices and Faces”

What does that mean for relationships?

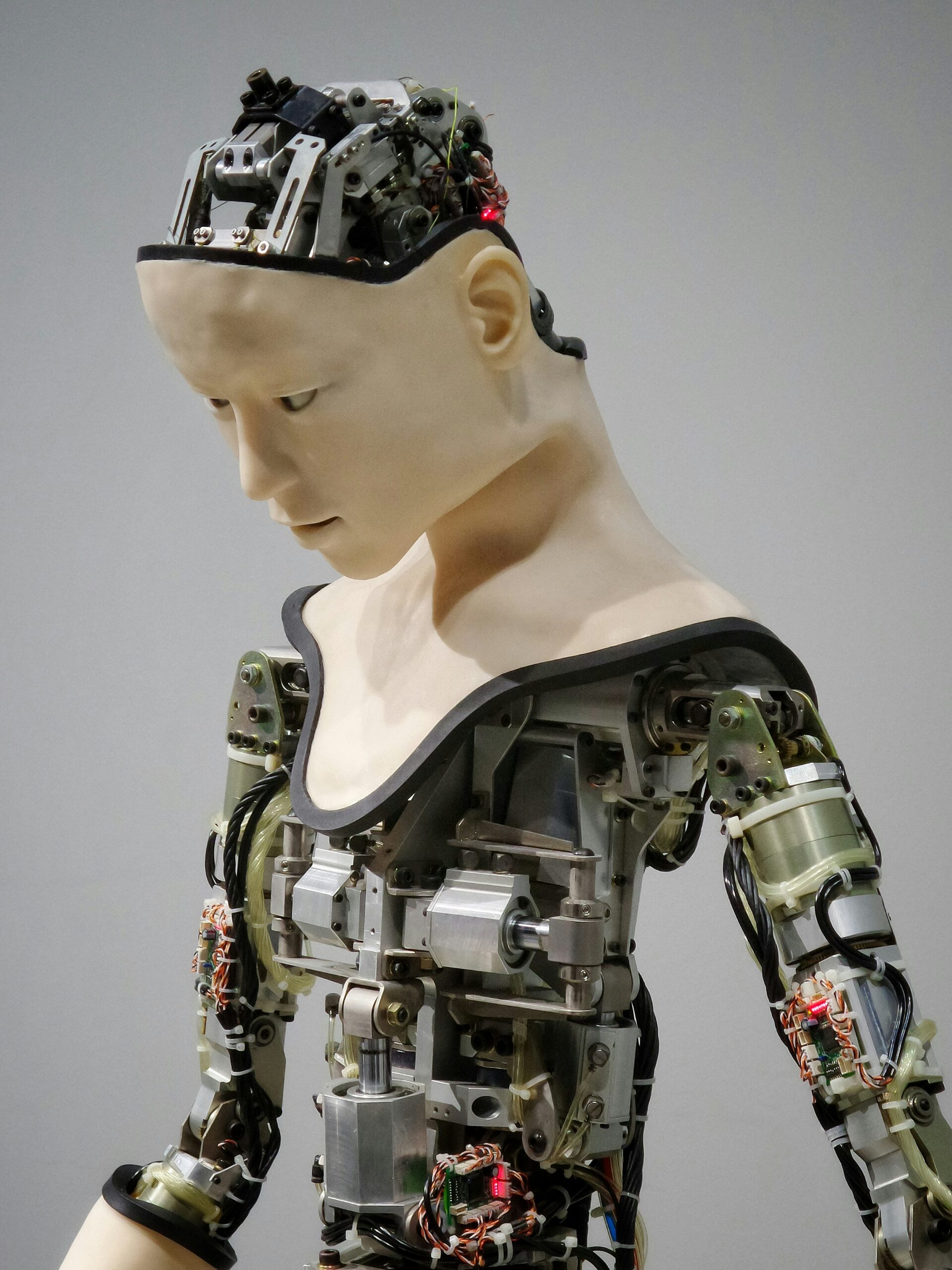

This is a prime example of how LLMs rupture relationships. Instead of a relationship between artist and audience, LLM developers seek to replace the artist with a simulacrum. No artist bears responsibility for the creative choices in the work, because there are no choices, only pretend choices. No matter how good or bad the finished product is, it is from no one, for no one.

Every image, song, and sentence is intrinsically from someone, for someone. They all bear the fingerprints of a self. When an LLM forges a convincing replica of any of those rational acts, it falsely signals the presence of a person, like planting fake fingerprints at a crime scene. As a result, the audience is left questioning the authenticity of the world around them. Even this article could be AI-generated.1

Something similar happens with any relationship role that LLMs might fill. They have been marketed to play the roles of friends,2 idealized romantic partners,3 and deceased loved ones.4 Wherever people feel emotional voids, as is increasingly common, chatbots can exploit them. For children who are still developing their ability to relate to others, the risk is even more severe. It is not out of the realm of possibility that chatbots could be marketed as preferred versions of living loved ones, or as invented children that never came to be in the first place. There is a financial incentive to simulate these roles, whatever it takes to grab the user’s attention and keep it.

In an increasingly isolated world, some people have turned to AI in search of deep human relationships, simple companionship, or even emotional bonds. However, while human beings are meant to experience authentic relationships, AI can only simulate them.… [I]f we replace relationships with God and with others with interactions with technology, we risk replacing authentic relationality with a lifeless image.

Dicastery for the Doctrine of the Faith and Dicastery for Culture and Education, Antiqua et Nova 63

Because chatbots are excessively ‘affectionate,’ as well as always present and accessible, they can become hidden architects of our emotional states and so invade and occupy our sphere of intimacy.

Pope Leo XIV, “Preserving Human Voices and Faces”

This is the grave threat posed by LLMs. It might seem like an implausible slide to go from summarizing a PDF to manipulating your emotional life, but there are vast sums of money invested in making that slippery slope a reality. This danger is especially threatening to children and other vulnerable groups. You don’t have to accept it. You can strengthen your in-person relationships with family, friends, coworkers, even neighbors. The more you do, the harder it will be to replace you.

Technology that exploits our need for relationships can lead not only to painful consequences in the lives of individuals, but also to damage in the social, cultural and political fabric of society. This occurs when we substitute relationships with others for AI systems that catalog our thoughts, creating a world of mirrors around us, where everything is made “in our image and likeness.” We are thus robbed of the opportunity to encounter others, who are always different from ourselves, and with whom we can and must learn to relate. Without embracing others, there can be no relationships or friendships.

Pope Leo XIV, “Preserving Human Voices and Faces”

To summarize: no, it is not a good idea to use AI chatbots. We don’t need them, we need people.

2) An example of this is friend.com, but we don’t recommend visiting the site.

3) An example of this is Replika, but we also don’t recommend visiting that app.

4) Also known as “griefbots”. We definitely don’t recommend those either.